By Agnieszka Gautier

It only takes a small uptick in temperature for Earth’s frozen regions, known as the cryosphere, to go from freezing to thawing. For decades, scientists have used lidar instruments to measure changes in Arctic sea ice, the Greenland Ice Sheet, and glaciers. These instruments send laser pulses to Earth’s surface and measure the time each signal takes to return. Those travel times are then converted into high-resolution maps of surface elevation.
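The timing principle behind lidar altimetry can be illustrated with a minimal sketch. It assumes a sensor pointing straight down and ignores the atmospheric delay, pointing-angle, and other corrections that real missions apply. The function names are hypothetical and do not come from any NASA or NSIDC software.

```python
# Speed of light in vacuum, in meters per second (exact by definition).
SPEED_OF_LIGHT = 299_792_458.0


def range_from_round_trip(round_trip_s: float) -> float:
    """Return the one-way distance in meters for a pulse's round-trip time.

    The pulse travels to the surface and back, so the distance to the
    surface is half of the total path length.
    """
    return SPEED_OF_LIGHT * round_trip_s / 2.0


def surface_elevation(sensor_altitude_m: float, round_trip_s: float) -> float:
    """Return surface elevation below a straight-down-pointing sensor.

    Simplified: elevation is the sensor altitude minus the measured range,
    both relative to the same reference surface.
    """
    return sensor_altitude_m - range_from_round_trip(round_trip_s)
```

For example, a pulse that returns after 2 microseconds has traveled roughly 300 meters each way, so a sensor flying at 500 meters would place the surface at about 200 meters.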

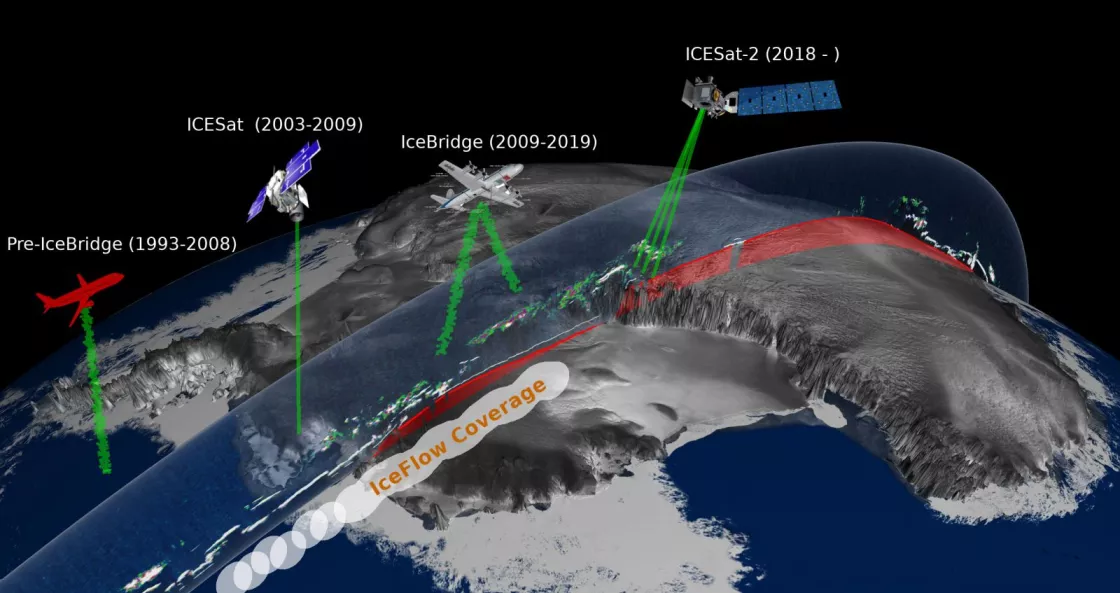

Since 1993, NASA has deployed lidar—first via aircraft, then via satellite—to collect altimetry data showing how much these icy regions have changed. But over time, evolving instruments and data formats made it challenging to compare measurements across missions.

To solve this problem, the NSIDC Distributed Active Archive Center (DAAC), with NASA support, developed IceFlow, an interactive tool that standardized and harmonized over 25 years of lidar data. IceFlow allowed scientists to access elevation data collected from 1993 to recent years by campaigns like ICESat, ICESat-2, and Operation IceBridge in a consistent format, dramatically reducing the technical burden for researchers.

A transformative approach

IceFlow addressed a major pain point: different data formats spanning decades. “Every time you make changes, you learn better ways of representing the data, leaving you with a trail of different formats,” said Steve Tanner, a data manager for the National Snow and Ice Data Center (NSIDC) Distributed Active Archive Center (DAAC). IceFlow solved this by creating a common structure for the data and integrating it into a user-friendly interface using Jupyter Notebooks, a widely adopted data science platform.

Beyond data formatting, IceFlow tackled the challenge of keeping coordinates accurate over time. Shifting tectonic plates and updates to the International Terrestrial Reference Frame (ITRF), Earth’s standard reference frame, had to be reconciled to ensure accurate, meaningful comparisons. IceFlow incorporated geophysical corrections and coordinate standardization, enabling high-precision research.
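To see why plate motion matters, consider a point fixed to a moving plate: its coordinates drift a little every year, so measurements taken years apart must be brought to a common date (epoch) before they are compared. The sketch below shows only that one step, using a simple constant-velocity model. It is an illustration under stated assumptions, not IceFlow's implementation: the `Position` type, the `propagate` function, and the velocity values are hypothetical, and converting between different ITRF realizations requires additional transformation parameters that are not shown.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Position:
    """Earth-centered Cartesian coordinates in meters at a given epoch."""
    x: float
    y: float
    z: float
    epoch: float  # decimal year, e.g. 2005.5


def propagate(
    pos: Position,
    velocity_m_per_yr: Tuple[float, float, float],
    target_epoch: float,
) -> Position:
    """Move a position to target_epoch assuming constant linear velocity."""
    dt = target_epoch - pos.epoch  # elapsed time in years (may be negative)
    vx, vy, vz = velocity_m_per_yr
    return Position(
        x=pos.x + vx * dt,
        y=pos.y + vy * dt,
        z=pos.z + vz * dt,
        epoch=target_epoch,
    )


# Illustrative values only: a point moving 1 cm/yr in x and -2 cm/yr in y.
start = Position(x=1000.0, y=2000.0, z=3000.0, epoch=2000.0)
moved = propagate(start, (0.01, -0.02, 0.0), target_epoch=2010.0)
```

Even at centimeters per year, a decade of drift adds up to a shift comparable to the elevation changes researchers are trying to detect, which is why the correction cannot be skipped.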

Data exploration made easy

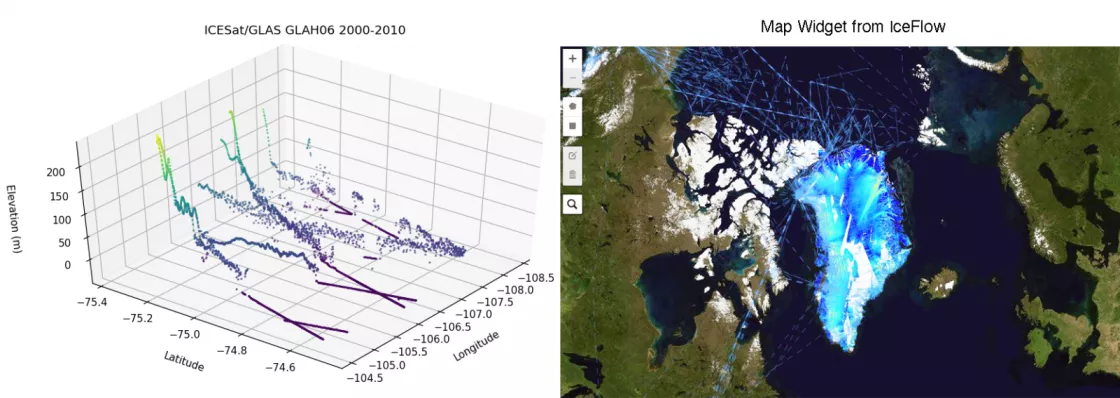

The tool allowed users to visualize, select, and subset data without downloading massive files. “The data are big,” Tanner noted. “We’re talking about trillions of data points in the case of ICESat-2.” Features like spatial subsetting and cloud-hosted previews let users focus only on areas of interest—like a single glacier—without pulling in entire regional datasets.
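Spatial subsetting, at its simplest, means keeping only the measurements that fall inside a region of interest. The following is a minimal sketch of a latitude/longitude bounding-box filter. It is not IceFlow's code: the `Point` type, the `subset_bbox` function, and the sample coordinates are hypothetical, and the filter does not handle boxes that cross the antimeridian.

```python
from typing import Iterable, Iterator, NamedTuple


class Point(NamedTuple):
    """A single elevation measurement."""
    lat: float          # degrees north
    lon: float          # degrees east
    elevation_m: float  # meters


def subset_bbox(
    points: Iterable[Point],
    south: float,
    north: float,
    west: float,
    east: float,
) -> Iterator[Point]:
    """Yield only the points that lie inside the bounding box (inclusive)."""
    for p in points:
        if south <= p.lat <= north and west <= p.lon <= east:
            yield p


# Illustrative measurements only, not real data.
measurements = [
    Point(69.2, -49.8, 120.0),
    Point(69.3, -49.5, 310.0),
    Point(72.0, -40.0, 2900.0),  # outside the box below
]
selected = list(subset_bbox(measurements, south=69.0, north=69.5,
                            west=-50.5, east=-49.0))
```

Because the function is a generator, it can filter a stream of points without holding the whole dataset in memory. When the same kind of filter runs on the server, only the matching points ever need to reach the user.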

IceFlow even helped address issues of data size and granularity. “We’re trying to lower the barrier for scientists,” said Luis Lopez, software engineer for the project. “We don’t want them to spend hours just trying to understand the data. Let’s get them started faster so they can do their research.”

Sunsetting the tool

As of June 11, 2025, IceFlow has been decommissioned due to technical dependencies as NSIDC DAAC transitions its data archive and backend infrastructure to NASA Earthdata Cloud. While the original tool is no longer supported, researchers can continue to apply the same data transformation and harmonization methods using the new standalone IceFlow Python library, which provides a “DIY” option for those looking to harmonize lidar data on their own.

Legacy and impact

Though the tool is no longer active, IceFlow’s impact remains significant. It demonstrated the importance of data harmonization and helped hundreds of researchers accelerate their work. It also laid the foundation for more streamlined, scalable, and researcher-centered tools for Earth science data access.

As lidar campaigns and satellite missions continue, the lessons of IceFlow will continue to shape how we make complex Earth data accessible to the science community.

Access data through the NSIDC DAAC

NASA's NSIDC DAAC manages, distributes, and supports a variety of cryospheric and climate-related datasets as one of the discipline-specific Earth Science Data and Information System (ESDIS) data centers within NASA's Earth Science Data Systems (ESDS) Program. User Resources include data documentation, help articles, data tools, training, and on-demand user support. Learn more about NSIDC DAAC services.